AI Tokens are the new currency of AI and have become one of the most important topics of discussion today, as AI apps have shifted to using tokens as a measure of work. Copilot quietly changed its pricing model and suddenly “tokens” are front and centre. Let’s look at how to save AI Tokens before they cost you.

Every word you type, every response you read, every file you paste into a chat — it all burns through AI Tokens. The good news is that with a few simple habits, you can dramatically cut how many tokens you burn without getting any less value from your AI tools.

But before diving into the tips, there’s one concept worth understanding first: context.

Every AI chat has a context window — the working memory it holds during a session. The catch is that the entire conversation history gets resent to the AI with every single message you send. A chat that starts at a few hundred tokens quietly grows into thousands as the session goes on. Most of that accumulated history is old, resolved, or irrelevant — but the AI processes it all anyway, every time.

This is why context management is the biggest lever you have on token usage. The tips in this article are really just different ways to keep context lean, relevant, and under your control.

The most structured approach is a three-layer file system that carries only the right context into each session — and nothing more:

| File | Purpose | When to load |
|---|---|---|
| CLAUDE.md / context file | Who you are, your preferences, standing instructions | Every session |
| memory.md | Project progress, decisions made, what’s next | Every session for that project |
| Skill files | How to do one specific task, your standards for it | Only when running that task |

Each layer targets a different kind of context waste. Together they mean you spend almost nothing on setup and almost everything on actual work. The rest of this article explains each one, plus eight other habits that compound the savings.

What Are AI Tokens, and Why Should You Care?

Tokens are the chunks that AI models use to read and generate text. One token is roughly four characters, or about three-quarters of a word in English. The sentence you just read consumed around 20 tokens.
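To get a feel for the four-characters rule of thumb, here is a minimal sketch that estimates the token count of a prompt. It is only an approximation: real tokenizers differ between models, and the names `estimate_tokens` and `CHARS_PER_TOKEN` are made up for this illustration.

```python
import math

CHARS_PER_TOKEN = 4  # rule of thumb from the text; real tokenizers vary by model


def estimate_tokens(text: str) -> int:
    """Rough token estimate: character count divided by four, rounded up."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


prompt = "Add a simple contact form to my website."
print(estimate_tokens(prompt))  # 40 characters, so the estimate is 10
```

Treat the result as a ballpark figure for comparing prompts, not as the number your provider will bill.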

Every message in a conversation, yours and the AI’s, counts against the context window. Think of it as the AI’s working memory. Claude, ChatGPT, Copilot and Gemini all have a limit on how much they can hold at once. Once you hit that limit, older parts of the conversation start to drop off, or you hit a hard wall.

Microsoft is now rolling tokens into Copilot’s pricing model, making them visible and finite for business users. That means being token-aware isn’t just good practice — it directly affects your budget.

How Tokens Actually Get Used in a Conversation

Most people assume only their messages count. In reality, every AI response is token consumption too. Longer replies cost more than short ones.

There’s another hidden cost: the entire conversation history is re-sent to the AI with every message. A chat that started at 200 tokens grows to 2,000 tokens after a dozen back-and-forth exchanges. By the time you’re deep into a long session, the AI is processing thousands of tokens just to answer a simple follow-up question.

This is why long, sprawling conversations are the single biggest source of unnecessary token use.
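The arithmetic behind that growth can be sketched in a few lines. This is a simplified model with illustrative numbers: it assumes every exchange adds the same number of tokens (170 here, a hypothetical figure) and that the full history is resent each time. The function name `context_sizes` is also just for illustration.

```python
def context_sizes(exchanges: int, tokens_per_exchange: int) -> list[int]:
    """Size of the context the model processes at each exchange.

    Assumes each exchange adds a fixed number of tokens and the whole
    history is resent with every new message.
    """
    return [tokens_per_exchange * n for n in range(1, exchanges + 1)]


sizes = context_sizes(exchanges=12, tokens_per_exchange=170)
print(sizes[-1])   # 2040: the context processed on the twelfth exchange
print(sum(sizes))  # 13260: total tokens processed across the whole session
```

Note that the total is far larger than the final context size. Under these assumptions the cost of a session grows roughly with the square of its length, which is why splitting one long chat into several short ones pays off.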

Start a New Chat for Each New Topic

This is the highest-impact habit you can build. One topic per conversation. Full stop.

When you keep adding unrelated questions to an existing chat, the AI carries the entire history of that thread into every new response. It re-reads your earlier discussion about recipe ideas while trying to help you debug a spreadsheet formula. That’s wasteful and it also degrades response quality, because the AI has less “room” to think about the thing you’re actually asking now.

A fresh chat starts with zero history, zero baggage, and a full context window. Use it freely.

Keep Your Prompts Tight and On-Topic

Long, rambling prompts don’t produce better answers; they produce longer, less focused ones. And then you use more tokens asking follow-up questions to clarify what you actually wanted.

Write your prompt like a brief to a contractor. State the task, the format you want, and any constraints. Cut the small talk and the background story unless the AI genuinely needs it.

Compare these two:

- “Hey so I’ve been thinking about this thing with my website and I guess I need some help, I’m not sure what the best approach is but basically I want to add a contact form…”

- “Add a simple contact form to my website. Fields: name, email, message. No CAPTCHA. Return HTML and CSS only.”

The second prompt is shorter, gets a tighter answer, and you’ll spend far fewer tokens on follow-ups.

Use a CLAUDE.md or Copilot Instructions File to Share Context Between Sessions

Here’s the problem with starting fresh chats: you lose context every time. The AI doesn’t remember your project structure, tech stack, your writing style, or the decisions you made last week.

The solution is a persistent context file — a plain text or Markdown file you keep updated and paste at the start of relevant sessions. Different tools have different conventions for this:

- Claude users often create a CLAUDE.md file: a concise document that describes who you are, what project you’re working on, your preferred response style, and any standing rules (e.g. “always give code in Python 3”, “assume I’m using Ubuntu”).

- GitHub Copilot supports a copilot-instructions.md file (stored at .github/copilot-instructions.md in a repo) that gives the AI persistent context about your codebase, conventions, and preferences.

- ChatGPT users can use the “Custom Instructions” feature or paste a structured context block at the session start.

The key is keeping the file short and current. A 200-word context file that’s accurate beats a 2,000-word dump that’s six months out of date. Include only what’s stable: your role, your tools, your preferred output format, and any project-specific facts the AI needs every time.

When you start a new chat, paste the file once at the top. That single paste replaces hundreds of tokens of re-explanation you’d otherwise spend across the session.

Two standing instructions worth adding to every context file:

The first controls output format: “Default all outputs to Markdown format. Only generate Word, Excel, or PDF files when I specifically ask for them.” This prevents the AI from defaulting to token-heavy file generation when plain text would do — you’ll see exactly why in the next section.

The second controls response length: “Keep all responses concise and direct. Skip explanations and reasoning unless I ask for them — if I need detail, I’ll ask.” This is a significant token saver. AI models naturally default to thorough, explained answers — they justify reasoning, add caveats, and walk through their thinking step by step. That’s useful sometimes, but when you just need the output, all that explanation is tokens you’re paying for and skimming past. With this instruction in place, the AI delivers the result first. Reasoning is still available — just follow up with “why?” or “explain that” — but it only appears when you actually want it.

Use Markdown for Drafts — Generate Files Only When You’re Ready

Asking the AI to produce a Word document, an Excel spreadsheet, or a PDF isn’t the same as asking for a text response. Generating a formatted file requires significantly more tokens than producing the same content as plain Markdown — the AI has to construct the file structure, encode formatting, handle styling, and in some cases run code to build the output. That overhead adds up fast.

Here’s the smarter workflow: get your content right in Markdown first, then convert to a file once you’re satisfied.

Markdown is plain text with lightweight formatting — headings, bold, tables, bullet lists — and it renders cleanly in almost every tool. More importantly, it’s cheap to generate and easy to feed back into a future AI session if you need to continue working on it. A Markdown document you save to your desktop is a perfectly re-usable context file for your next chat.

Only ask for a .docx, .xlsx, or .pdf when the output is final and you genuinely need that format — to email a client, submit a form, or hand off to a colleague who doesn’t use Markdown.

The practical rule: draft in Markdown, export to file format once.

Don’t Paste Raw Documents — Summarise First

Pasting a 10-page PDF or a long email thread directly into a chat burns enormous tokens, and most of that content is irrelevant to what you’re actually asking.

Instead, extract only the relevant section before pasting. If you need the AI to reply to an email, paste the specific paragraph that requires a response — not the entire thread. If you’re working from a document, paste the relevant clause, not the whole file.

When you do need the AI to process a large document, ask it to summarise first, then ask follow-up questions based on the summary. You’ll use a fraction of the tokens and often get sharper answers.
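Extracting the relevant section can be done by hand, but a small script helps with long documents. The sketch below is one possible approach, assuming paragraphs are separated by blank lines and that a plain keyword match is good enough. The function name `relevant_paragraphs` and the sample email thread are hypothetical.

```python
def relevant_paragraphs(document: str, keywords: list[str]) -> str:
    """Keep only the paragraphs that mention at least one keyword.

    Assumes paragraphs are separated by blank lines; matching is a plain
    case-insensitive substring check, not a semantic search.
    """
    wanted = [k.lower() for k in keywords]
    paragraphs = [p.strip() for p in document.split("\n\n") if p.strip()]
    kept = [p for p in paragraphs if any(k in p.lower() for k in wanted)]
    return "\n\n".join(kept)


# Hypothetical email thread: only the middle paragraph needs a reply.
thread = (
    "Thanks for lunch last week.\n\n"
    "Can you confirm the invoice total before Friday?\n\n"
    "See you at the conference."
)
print(relevant_paragraphs(thread, ["invoice"]))
```

Paste the filtered text instead of the whole thread, and check by eye that nothing the AI needs was dropped.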

Ask for Shorter Outputs When Length Doesn’t Matter

AI models default to thorough. That’s usually good — but when you just need a quick answer, a snippet of code, or a single headline, a verbose response wastes tokens you’ll pay for.

Tell the AI what format you want. A few phrases that work well:

- “Reply in 3 bullet points.”

- “Give me the code only, no explanation.”

- “One-sentence answer.”

- “TL;DR version.”

Most AI tools follow these instructions reliably. Get in the habit of adding a format instruction to any prompt where a shorter output would serve you just as well.

Avoid Repeating Context the AI Already Has

A common habit is to re-explain the situation at the start of every message. “As I mentioned, I’m building a WordPress site and…” — if you said this three messages ago, the AI already knows. You’re burning tokens on repetition.

Trust the context window. Within a single session, the AI remembers everything you’ve discussed. You only need to repeat something if the conversation has gone long enough that you think it may have dropped from the context. Even then, a brief reference is enough: “Going back to the login page issue…” is sufficient.

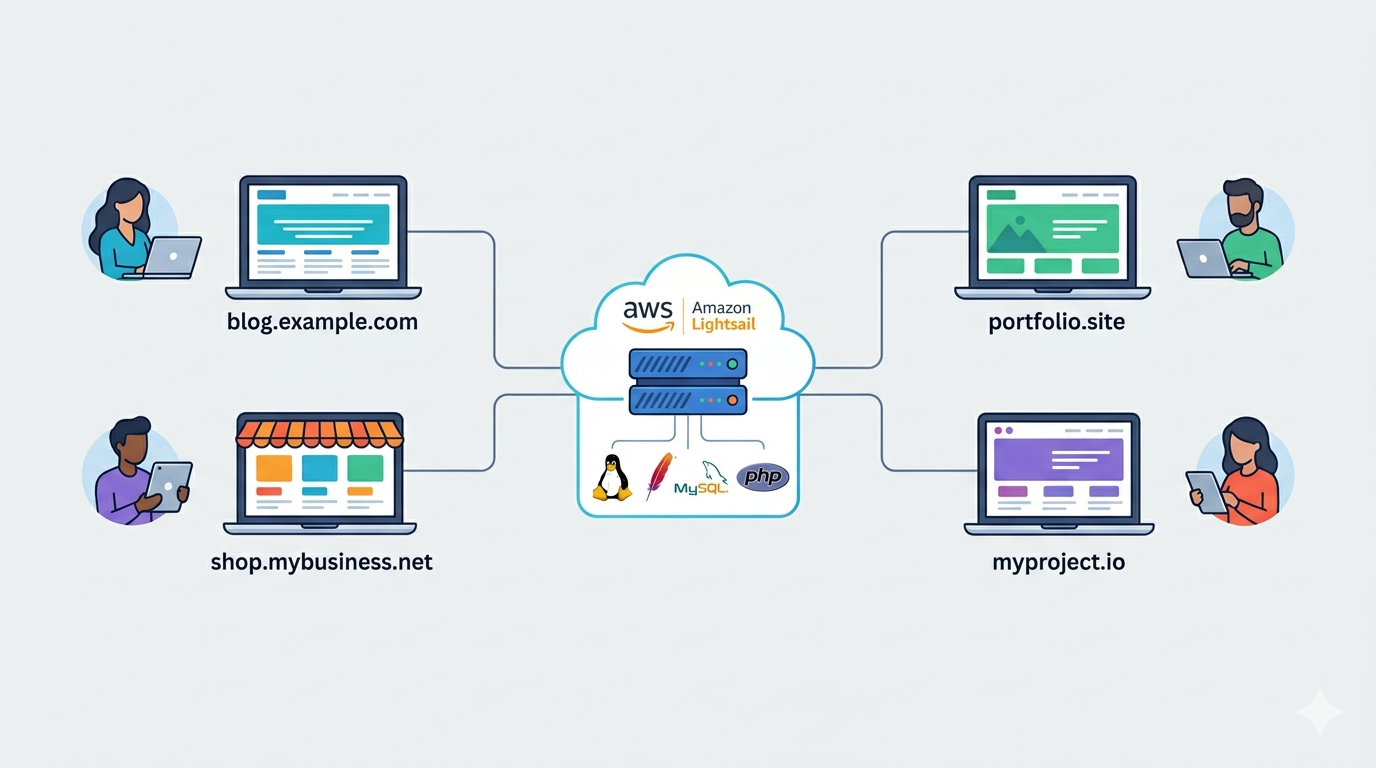

For Big Projects, Use a Folder and a memory.md File

A single context file works well for your personal preferences and standing instructions. But if you’re running a big project — a software build, a research report, a content series — you need something more structured. A dedicated project folder with a memory.md file is the cleanest solution.

Here’s how it works. Create one folder on your machine for the project. Inside it, keep all your Markdown drafts, code snippets, notes, and a single file called memory.md. This file is your shared brain across AI sessions.

After each chat session, spend two minutes updating memory.md with what was decided, what was built, and what comes next. Think of it as a handoff note from your current self to your future self — and to the AI in every future session.

A well-maintained memory.md might include:

- Decisions made — architecture choices, tone decisions, structural choices you don’t want to revisit

- Work completed — sections written, features built, tasks done

- Open questions — things still to resolve in the next session

- Key facts — names, URLs, config values, anything the AI needs to know but shouldn’t have to re-derive

When you start a new chat for that project, paste memory.md at the top. The AI instantly has everything it needs — no re-explanation, no re-reading old chats, no token waste reconstructing context.
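If you prefer to keep memory.md in a consistent shape, a short script can render it from your session notes. This is a sketch under assumptions: the four section headings mirror the list above, while the `render_memory` name, the “Project memory” title and the sample notes are invented for illustration.

```python
SECTIONS = ("Decisions made", "Work completed", "Open questions", "Key facts")


def render_memory(notes: dict[str, list[str]]) -> str:
    """Render the four sections as a short Markdown document."""
    lines = ["# Project memory", ""]
    for section in SECTIONS:
        lines.append(f"## {section}")
        # Show a placeholder so an empty section is still visible.
        for item in notes.get(section) or ["(nothing yet)"]:
            lines.append(f"- {item}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical notes from one working session.
notes = {
    "Decisions made": ["Use WordPress with a child theme"],
    "Work completed": ["Contact form: name, email, message"],
    "Open questions": ["Which page hosts the form?"],
}
print(render_memory(notes))
```

Saving the result takes one more line with the standard library, for example `Path("memory.md").write_text(render_memory(notes), encoding="utf-8")` after `from pathlib import Path`.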

This is far more efficient than one long continuous session. A single session that runs for days accumulates thousands of tokens of history, most of it irrelevant to where you are now. A fresh session with a lean memory.md gives the AI only what’s current and useful. You stay in control of what carries forward.

The folder structure also means all your Markdown files are in one place, ready to paste selectively into any session without hunting through chat history.

Store Reusable Instructions as Skill Files — Not in Every Chat

Here’s a pattern that most people never discover: you can store detailed task instructions in a file and load them only when needed, rather than typing or pasting the same instructions repeatedly across sessions.

Think about tasks you ask the AI to do regularly. Write a blog post. Review a document for tone. Summarise meeting notes. Analyse a competitor. Each of these probably has a specific way you like it done — a structure you prefer, a tone you want, things to include or avoid. If you retype those preferences every session, you’re burning tokens on instructions that never change.

The alternative is a skill file — a short Markdown file that captures how you want a specific task done. One file per task type, stored in your project folder (or a general ~/ai-instructions/ folder you reuse across projects).

For example:

- blog-post.md: your preferred article structure, word count, tone, SEO requirements

- meeting-summary.md: the format you want (decisions, action items, open questions, owners)

- competitor-review.md: the dimensions you always compare (pricing, features, positioning, weaknesses)

When you need that task done, you paste the relevant skill file once at the start of the session alongside your actual content. The AI follows your established process without you re-explaining it. The session stays short, focused, and on-brief.
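The three layers (context file, memory.md, skill file) can be stitched into a single opening message with a few lines of code. The sketch below is one way to do it, not a feature of any particular tool. The folder name `my-project`, the `skills` subfolder and both function names are assumptions made for this example.

```python
from pathlib import Path


def read_if_present(path: Path) -> str:
    """Return the file's text, or an empty string if it does not exist."""
    return path.read_text(encoding="utf-8").strip() if path.is_file() else ""


def build_opening_message(context: str, memory: str, skill: str = "", task: str = "") -> str:
    """Join the layers into one opening message, skipping empty ones."""
    parts = [context, memory, skill, task]
    return "\n\n---\n\n".join(p.strip() for p in parts if p.strip())


project = Path("my-project")  # hypothetical project folder
message = build_opening_message(
    context=read_if_present(project / "CLAUDE.md"),
    memory=read_if_present(project / "memory.md"),
    # Load a skill file only for the task at hand.
    skill=read_if_present(project / "skills" / "meeting-summary.md"),
    task="Summarise the meeting notes below.",
)
print(message)
```

Because missing files are skipped, the same script works for a quick one-off chat (task only) and for a full project session (all three layers).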

This also works as a quality control tool. When a task requires precision — a proposal, a client report, a job posting — a skill file ensures the AI never misses your standards, no matter how long ago you last ran that task.

The broader principle is simple: anything you explain to an AI more than twice belongs in a file. Move it out of your chat history and into a reusable asset. Your token count shrinks, your outputs get more consistent, and you stop starting from scratch every time.

Avoid Using AI Chat for Tasks That Don’t Need It

This one sounds obvious, but it’s worth saying. Not every question needs an AI. Factual lookups, quick calculations, and simple searches are often faster — and zero tokens — when you use a search engine or a calculator directly.

Use AI for tasks that benefit from reasoning, generation, or synthesis. Use it to draft, explain, plan, debug, rewrite, and analyse. Don’t use it as a fancy Google when a plain Google would do.

Review Long Conversations Periodically

If you’re deep into a working session and notice the context getting bloated — the AI starting to lose track of earlier decisions, or responses getting slower — it’s a signal to reset.

Start a new chat and open with a fresh context summary. Pull the key decisions and facts from the old thread and write them up in 3–5 bullet points. Paste that as your opening message. You’ve now reset the token counter while preserving everything that mattered.

This is especially useful for long coding or writing projects where sessions span multiple hours.

A Quick Token-Saving Checklist

Before you send your next message, run through this:

- Is this a new topic? Start a new chat.

- Is my prompt as short as it can be while still being clear? Trim it.

- Am I pasting more than I need to? Extract only the relevant section.

- Do I need a long response, or will a short one do? Say so.

- Am I re-explaining something the AI already knows? Delete it.

- Do I actually need a Word or Excel file right now, or will Markdown do? Use Markdown until it’s final.

- Is this a big project? Maintain a project folder and update memory.md after each session.

- Am I explaining a task I’ve explained before? Put it in a skill file.

Five seconds of thought before each message can cut your token use substantially, often without any loss in quality.

Frequently Asked Questions

What is a token in AI tools like Claude or Copilot?

A token is a small chunk of text: roughly four characters, or about three-quarters of a word. AI models process everything in tokens, including your messages, their responses, and the entire conversation history. Most pricing and usage limits are measured in tokens rather than words or messages.

How many tokens does a typical conversation use?

A short exchange of five to ten messages typically uses between 500 and 2,000 tokens. A long, complex session with document pastes and detailed responses can easily reach 20,000–50,000 tokens. The exact count depends on message length, response length, and how much context the AI carries.

Does starting a new chat really save tokens?

Yes, significantly. Every message in an existing conversation is resent to the AI with each new exchange. A fresh chat starts the counter at zero. For a completely different topic, starting a new chat is almost always the right call.

What should I put in a CLAUDE.md or Copilot Instructions file?

Keep it short and factual. Include your role or job function, the tools and tech stack you regularly use, your preferred response format (e.g. concise vs detailed, code language preference), and any project-specific context that’s stable over time. Aim for under 300 words.

Will using fewer tokens affect the quality of AI responses?

Not if you’re deliberate about it. Cutting irrelevant context and tightening your prompts often improves response quality, because the AI focuses on what actually matters. The goal isn’t to starve the AI of information — it’s to give it the right information efficiently.

Why does generating a Word or Excel file use more tokens than Markdown?

Producing a formatted file requires the AI to build the document structure, encode formatting properties, and often execute code to assemble the output — all of which consumes tokens beyond the actual content. Plain Markdown generates the same information with none of that overhead. Use Markdown while you’re iterating, and only request a file when the output is finalised and you need a specific format for sharing or submission.

What should I put in a memory.md file for a big project?

Focus on four things: decisions already made (so the AI doesn’t re-open settled questions), work completed (so it knows where you are), open questions for the next session, and any key facts or values it needs repeatedly. Keep each entry short — one or two sentences. Update it at the end of every session, and paste it at the top of every new one. A tidy 300-word memory.md is worth more than 10,000 tokens of chat history.

What is a skill file and how is it different from a context file?

A context file (like CLAUDE.md) tells the AI about you and your project; it’s background that applies to every session. A skill file describes how to do one specific task; it’s a reusable brief you paste only when you need that task done. Think of a context file as your profile and a skill file as a standard operating procedure. Both save tokens, but in different ways: the context file replaces repeated self-introduction, while skill files replace repeated task instructions.

Should I really turn off explanations by default — won’t that make answers less useful?

Not in practice. The instruction “skip explanations unless I ask” doesn’t remove the AI’s reasoning ability — it just stops it arriving unrequested. You get the output first, which is usually all you need. When you do want the reasoning — to understand a decision, to learn something new, to sanity-check an approach — one follow-up question unlocks it instantly. The result is you consume tokens on explanations only when they have actual value to you, not on every single response.

Tokens are the unit of value in every AI interaction you have. Treat them like data on a mobile plan — you don’t need to be miserly, but a few simple habits mean you never run out at the wrong moment. Start with one change: keep each chat to one topic. Everything else will follow from there.